In my role as a Senior DR Engineer at a major hospitality enterprise, I inherited responsibility for disaster recovery across 26 Kafka and ZooKeeper clusters spanning 4 AWS accounts. The clusters were critical infrastructure — real-time messaging backbones handling reservation data, loyalty transactions, and property management events. DR failovers for these clusters were entirely manual, and every exercise was a stressful, error-prone marathon.

Here's the thing: I didn't know Kafka. I didn't know ZooKeeper. I'd never configured either one. What I did know was AWS infrastructure, disaster recovery patterns, and how to describe a problem clearly. That last part turned out to be the skill that mattered most.

The Problem: Manual DR Is a Nightmare at Scale

Every DR failover exercise meant manually cycling 26 clusters. Each cluster had a 3-node ZooKeeper ensemble managed by Exhibitor, a 3-node Kafka broker fleet, Auto Scaling Groups, Route53 DNS records, and SSM-based health checks that all had to be orchestrated in the right order.

ZooKeeper has to come up first and form a quorum before Kafka brokers can start. DNS records need to be updated. Health verification requires sending four-letter commands to ZooKeeper, checking Exhibitor's API, and validating Kafka broker registration. Getting any of this wrong can leave a cluster in a split-brain state or silently drop messages.
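To make the health verification step concrete, here is a minimal sketch of how ZooKeeper's four-letter commands can be sent and interpreted. It assumes a direct TCP connection to the client port for illustration, whereas the tool described here ran its checks through SSM. The function names are hypothetical, and treating only the "leader" and "follower" roles as healthy is an assumption about what counts as quorum membership.

```python
import socket


def send_four_letter_word(host: str, port: int, word: str, timeout: float = 5.0) -> str:
    """Send a ZooKeeper four-letter command (e.g. 'ruok', 'mntr') and return the reply."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(word.encode("ascii"))
        chunks = []
        while True:
            data = sock.recv(4096)
            if not data:  # the server closes the connection after replying
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8", errors="replace")


def parse_mntr(output: str) -> dict:
    """Turn the tab-separated 'mntr' output into a dict of key -> value strings."""
    stats = {}
    for line in output.splitlines():
        key, sep, value = line.partition("\t")
        if sep:
            stats[key.strip()] = value.strip()
    return stats


def node_is_serving(ruok_reply: str, mntr_stats: dict) -> bool:
    """Assumption: healthy means the node answers 'imok' and holds a quorum role."""
    in_quorum = mntr_stats.get("zk_server_state") in {"leader", "follower"}
    return ruok_reply.strip() == "imok" and in_quorum
```

A caller would combine the two replies per node, for example `node_is_serving(send_four_letter_word(host, 2181, "ruok"), parse_mntr(send_four_letter_word(host, 2181, "mntr")))`, where 2181 is the conventional client port.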

Doing this manually across 26 clusters took an entire day. It was exhausting, error-prone, and scaled terribly. If we ever had an actual disaster — not just an exercise — the time pressure would make manual execution even riskier.

I needed automation. But building a tool to orchestrate Kafka and ZooKeeper DR operations from scratch, in a technology stack I'd never worked with, felt like a project that would take months if I approached it traditionally.

The Approach: Describe the Problem, Let AI Write the Code

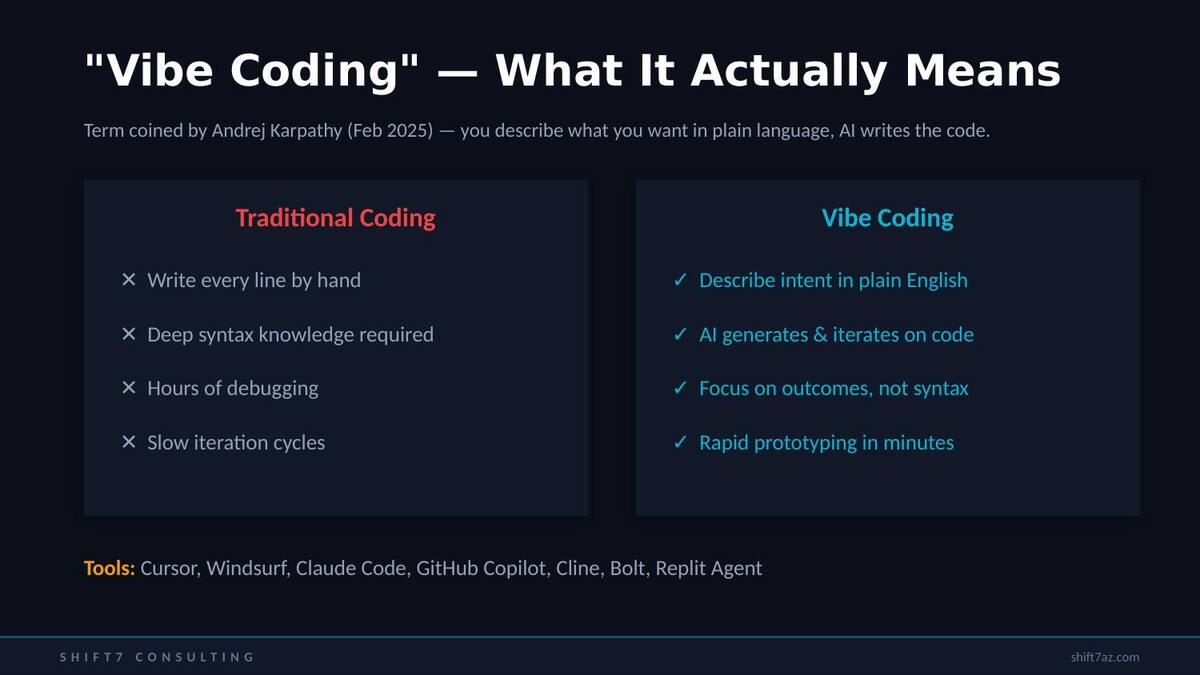

This project happened in late 2024, right as AI coding tools were maturing from novelty into genuine productivity multipliers. I was already using Claude for everyday tasks — troubleshooting, script generation, documentation. But the Kafka DR Manager was the first time I committed to what Andrej Karpathy would later call "vibe coding" for a serious, production-grade tool.

The workflow looked like this: I would describe what I needed in plain English — the operational goal, the AWS services involved, the constraints — and iterate with AI to generate the Python code. I couldn't review the Kafka-specific logic from expertise, but I could review the AWS API calls, the error handling patterns, the SSM command structures, and the overall orchestration flow. That was enough.

Vibe coding doesn't mean abandoning expertise. It means applying the expertise you have — infrastructure patterns, operational knowledge, architectural judgment — and letting AI fill in the domain-specific gaps. I knew how ASGs work, how SSM runs commands, how Route53 updates DNS. I didn't know ZooKeeper's four-letter commands or Exhibitor's API. AI handled that part.

Every conversation with the AI followed the same pattern: I'd describe the operational scenario ("what should happen when two ZooKeeper nodes are healthy but one is down and not recovering"), the AI would generate the detection and remediation logic, and I'd test it against real infrastructure. When something didn't work, I'd paste the error output back and iterate.

What We Built: The Kafka DR Manager

The result was a Python CLI tool — the Kafka DR Manager — that grew from a simple start/stop script to a comprehensive DR automation platform over about 15 iterative versions. By v15.12, it supported a full suite of operations:

# The core commands

kafka-dr-manager assess-and-remediate --cluster prod-kafka-01

kafka-dr-manager health-check --cluster prod-kafka-01

kafka-dr-manager cycle --cluster prod-kafka-01

kafka-dr-manager zk-quorum-check --cluster prod-kafka-01

kafka-dr-manager dns-check --cluster prod-kafka-01

The assess-and-remediate command was the crown jewel. It would evaluate the health of a cluster's ZooKeeper ensemble and Kafka brokers, diagnose the specific failure mode, and execute the correct remediation sequence, all without human intervention. It understood dependency ordering (ZooKeeper must be healthy before touching Kafka), handled partial failures (one node down vs. full ensemble loss), and used parallel execution via ThreadPoolExecutor to process multiple clusters concurrently.
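The dependency ordering and parallel execution described above can be sketched roughly as follows. This is not the tool's actual code: the function names, the state labels, and the injected `check_*` and `fix_*` hooks are all hypothetical stand-ins for the real SSM-backed operations. The quorum rule (a strict majority of the ensemble) is standard ZooKeeper behavior.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def classify_ensemble(healthy_nodes: int, ensemble_size: int = 3) -> str:
    """Classify ZooKeeper state by comparing healthy nodes to the majority quorum."""
    quorum = ensemble_size // 2 + 1
    if healthy_nodes == ensemble_size:
        return "healthy"
    if healthy_nodes >= quorum:
        return "degraded"     # quorum holds, but at least one node needs repair
    return "quorum_lost"      # the ensemble as a whole needs recovery


def assess_and_remediate(cluster, check_zk, fix_zk, check_kafka, fix_kafka):
    """ZooKeeper first; Kafka is only touched once the ensemble is fully healthy."""
    state = classify_ensemble(check_zk(cluster))
    if state != "healthy":
        fix_zk(cluster, state)
        state = classify_ensemble(check_zk(cluster))
        if state != "healthy":
            return "zookeeper_" + state   # stop here, never touch Kafka
    if not check_kafka(cluster):
        fix_kafka(cluster)
        if not check_kafka(cluster):
            return "kafka_unhealthy"
    return "ok"


def run_all(clusters, max_workers=8, **hooks):
    """Process clusters concurrently; one cluster's failure does not stop the rest."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(assess_and_remediate, c, **hooks): c for c in clusters}
        for future in as_completed(futures):
            cluster = futures[future]
            try:
                results[cluster] = future.result()
            except Exception as exc:
                results[cluster] = f"error: {exc}"
    return results
```

Passing the checks and fixes in as callables keeps the ordering logic testable without any real cluster, which matters when the operator cannot review the Kafka-specific parts from expertise.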

The tool ran entirely through AWS Systems Manager — no SSH keys, no bastion hosts. Every operation was an SSM command sent to the instance: checking ZooKeeper status via four-letter commands, querying Exhibitor's REST API, restarting Kafka services, validating DNS records against Route53. The configuration was a simple JSON file mapping cluster names to their ASG names, DNS entries, ports, and AWS account profiles.
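As an illustration of the SSM-only approach, here is a minimal sketch of running a shell command on one instance and waiting for the result. The `ssm` argument is assumed to behave like `boto3.client("ssm")`, and `send_command` and `get_command_invocation` are real calls on that client. The helper name, the timeout values, and the polling strategy are assumptions, not the tool's actual implementation.

```python
import time

# Invocation statuses after which SSM will not change the result any further.
TERMINAL_STATES = {"Success", "Failed", "Cancelled", "TimedOut"}


def run_ssm_command(ssm, instance_id, commands, timeout_s=60, poll_s=2.0):
    """Run shell commands on one instance via SSM and return (status, stdout).

    `ssm` is expected to behave like boto3.client("ssm").
    """
    response = ssm.send_command(
        InstanceIds=[instance_id],
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": list(commands)},
    )
    command_id = response["Command"]["CommandId"]

    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        # Sleep before the first poll: the invocation record may not exist
        # immediately after send_command returns.
        time.sleep(poll_s)
        invocation = ssm.get_command_invocation(
            CommandId=command_id, InstanceId=instance_id
        )
        if invocation["Status"] in TERMINAL_STATES:
            return invocation["Status"], invocation.get("StandardOutputContent", "")
    return "TimedOut", ""
```

A ZooKeeper liveness check could then look like `run_ssm_command(ssm, instance_id, ["echo ruok | nc localhost 2181"])`, assuming `nc` is installed on the instance and ZooKeeper listens on its conventional port.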

The Real Test: A DR Failover Goes Sideways

The automation worked beautifully in testing. Then came the real DR failover exercise — and it taught us more than any test ever could.

During a failover event in us-east-2, DNS and SSM services became unstable. Our automation — which depends completely on SSM for every operation — started producing non-deterministic results. Different clusters would fail with different errors on every run. Re-running the same operation would sometimes succeed and sometimes fail. It was impossible to tell whether we had a bug in our code or AWS was having a bad day.

When automation that normally works perfectly starts producing random, inconsistent results during an incident, the problem is almost certainly the underlying infrastructure — not your code. The correct response is to pause automation and fall back to manual operations, not to debug code under pressure. We fell back to manual and completed the exercise safely across all 26 clusters.

That incident led directly to v16 of the tool. Using the same vibe coding approach, I described the failure mode to AI and we built out four new capabilities:

Infrastructure Preflight Checks: detect before you execute

Before running any operations, the tool now tests SSM, Route53, EC2, and Auto Scaling API health. If any dependency is degraded, it fails fast with a clear message instead of producing unreliable results.
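A fail-fast preflight along these lines can be sketched as below. Each probe is simply a callable that raises when its dependency misbehaves. The names are hypothetical, and the boto3 calls mentioned in the comment are only one plausible choice of cheap read-only probes, not necessarily what the tool uses.

```python
class PreflightError(RuntimeError):
    """Raised when at least one infrastructure dependency looks degraded."""


def run_preflight(checks):
    """Run every probe and return a list of (name, reason) for the ones that failed."""
    failures = []
    for name, probe in checks.items():
        try:
            probe()
        except Exception as exc:
            failures.append((name, f"{type(exc).__name__}: {exc}"))
    return failures


def require_healthy_dependencies(checks):
    """Fail fast with one clear message instead of running on shaky infrastructure."""
    failures = run_preflight(checks)
    if failures:
        details = "; ".join(f"{name} ({reason})" for name, reason in failures)
        raise PreflightError(f"Preflight failed, refusing to run: {details}")


# Assumed wiring with boto3 clients (read-only calls chosen for illustration):
# checks = {
#     "ssm": lambda: ssm.describe_instance_information(MaxResults=5),
#     "route53": lambda: route53.list_hosted_zones(MaxItems="1"),
#     "ec2": lambda: ec2.describe_regions(),
#     "autoscaling": lambda: autoscaling.describe_auto_scaling_groups(MaxRecords=1),
# }
```

Running all probes before reporting, rather than stopping at the first failure, gives the operator the full picture of which services are degraded in a single message.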

Circuit Breaker Pattern: fail safe, not fail weird

After 3 consecutive SSM failures, the tool pauses automatically and asks for confirmation before continuing. No more chasing ghosts during infrastructure incidents.
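The idea can be sketched in a few lines. This is a simplified version under stated assumptions: the class and method names are hypothetical, the threshold of 3 comes from the description above, and the confirmation prompt is injected so the behavior can be exercised without a terminal.

```python
class CircuitOpenError(Exception):
    """Raised when the operator declines to continue after repeated failures."""


class CircuitBreaker:
    def __init__(self, threshold=3, confirm=None):
        self.threshold = threshold
        self.confirm = confirm or self._ask_operator
        self.consecutive_failures = 0

    @staticmethod
    def _ask_operator(message):
        return input(f"{message} Continue? [y/N] ").strip().lower() == "y"

    def call(self, operation, *args, **kwargs):
        """Run operation; pause for confirmation once failures pile up in a row."""
        try:
            result = operation(*args, **kwargs)
        except Exception as exc:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.threshold:
                message = f"{self.consecutive_failures} consecutive failures, last: {exc}"
                if not self.confirm(message):
                    raise CircuitOpenError(message) from exc
                self.consecutive_failures = 0  # operator chose to continue
            raise  # the caller still sees the original failure
        self.consecutive_failures = 0  # any success resets the streak
        return result
```

Counting only consecutive failures is the important design choice: an occasional failed command is normal, while an unbroken streak suggests the underlying service is the problem rather than any single cluster.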

Health Timeline Logging: incident forensics

Every infrastructure dependency check is logged with timestamps to a separate file. During post-incident review, you can see exactly when services degraded and how the tool responded.
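One simple way to get this kind of timeline is an append-only file with one JSON record per check. The sketch below assumes that format. The field names, the file layout, and the helper names are hypothetical, not the tool's actual log schema.

```python
import json
from datetime import datetime, timezone


def record_check(path, dependency, healthy, detail=""):
    """Append one timestamped dependency check to the timeline file."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "dependency": dependency,
        "healthy": healthy,
        "detail": detail,
    }
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry


def read_timeline(path):
    """Load the timeline back as a list of dicts, oldest first."""
    with open(path, encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


def first_degradation(entries, dependency):
    """Return the earliest unhealthy entry for a dependency, or None."""
    for entry in entries:
        if entry["dependency"] == dependency and not entry["healthy"]:
            return entry
    return None
```

During a post-incident review, `first_degradation(read_timeline(path), "ssm")` answers the question "when did SSM first look unhealthy?" without digging through the main operation log.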

Sequential Mode: the --sequential flag

A degraded mode that processes clusters one at a time with verbose logging, making it possible to isolate issues when parallel execution obscures the root cause.
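A flag like this can be wired in with very little code. The sketch below is an assumption about how it might look: it leaves out the tool's subcommands, and the `--max-workers` option and the settings dictionary are hypothetical. Only `--sequential` comes from the description above.

```python
import argparse
import logging


def build_parser():
    parser = argparse.ArgumentParser(prog="kafka-dr-manager")
    parser.add_argument(
        "--sequential",
        action="store_true",
        help="process clusters one at a time with verbose logging",
    )
    # Hypothetical option, included only to show what --sequential overrides.
    parser.add_argument("--max-workers", type=int, default=8)
    return parser


def execution_settings(args):
    """Translate CLI flags into worker count and log level."""
    if args.sequential:
        # One worker means clusters run strictly one after another.
        return {"max_workers": 1, "log_level": logging.DEBUG}
    return {"max_workers": args.max_workers, "log_level": logging.INFO}
```

Because a `ThreadPoolExecutor` with a single worker runs submitted tasks one at a time in submission order, sequential mode can reuse the same execution path as the parallel mode rather than needing separate code.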

The v16 upgrade, including all four features, testing, and the incident summary documentation, took a single afternoon. Without AI coding tools, I estimate it would have taken a week or more of development.

Building a Test Lab (From Scratch, Knowing Nothing)

One of the most impressive applications of this approach was building the test environment itself. I needed a real Kafka and ZooKeeper ensemble in AWS to test the DR Manager against — actual EC2 instances, actual ASGs, actual Exhibitor and Kafka processes. Not mocks. Not Docker containers. Real infrastructure that mirrors production.

I described the requirements to Claude: "I need a 3-node ZooKeeper ensemble with Exhibitor and a 3-node Kafka cluster, deployed via CloudFormation, using the same ASG and Route53 patterns as our production environment. I know nothing about configuring ZooKeeper or Kafka — everything needs to be automated in the UserData scripts."

What came back was a complete CloudFormation template, deploy scripts, management tooling, and a config file that matched the exact format my DR Manager expected. ZooKeeper configured itself: proper zoo.cfg, myid assignment, ensemble formation, four-letter commands enabled. Kafka auto-discovered the ZooKeeper ensemble and joined the cluster. I deployed the stack and started testing against it the same day.
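For readers who, like me, had never seen these files: the sketch below shows roughly what the UserData automation has to produce for a 3-node ensemble. It is an illustration, not the generated template. The helper names, host names, data directory, and timing values are assumptions, while the `server.N=host:2888:3888` entries, the `myid` file, and the `4lw.commands.whitelist` setting are standard ZooKeeper configuration.

```python
def render_zoo_cfg(hosts, data_dir="/var/lib/zookeeper", client_port=2181):
    """Render a zoo.cfg for an ensemble; server IDs are 1-based list positions."""
    lines = [
        "tickTime=2000",
        "initLimit=10",
        "syncLimit=5",
        f"dataDir={data_dir}",
        f"clientPort={client_port}",
        # Four-letter commands must be explicitly allowed on recent versions.
        "4lw.commands.whitelist=ruok,stat,mntr,srvr",
    ]
    for server_id, host in enumerate(hosts, start=1):
        # 2888 is the conventional peer port, 3888 the leader election port.
        lines.append(f"server.{server_id}={host}:2888:3888")
    return "\n".join(lines) + "\n"


def myid_for(host, hosts):
    """Content of <dataDir>/myid for this host: its server ID and nothing else."""
    return str(hosts.index(host) + 1)
```

The two pieces must agree: a node whose `myid` does not match its own `server.N` line will not join the ensemble, and a `zoo.cfg` that lists only some of the servers leaves the ensemble unable to form as intended.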

Did it work perfectly on the first try? No. We hit issues — missing myid files, incomplete zoo.cfg entries, ensemble formation timing. But every problem was solved the same way: describe the error, get the fix, test again. The debugging loop that used to take days of reading documentation for an unfamiliar technology collapsed into minutes.

What This Taught Me About Vibe Coding

After building and battle-testing this tool across 16 major versions, here's what I actually believe about vibe coding as a serious approach for infrastructure work:

It's not about not knowing how to code. I can write Python. I can write Bash. What vibe coding gave me was the ability to build in a domain I didn't know — Kafka internals, ZooKeeper quorum mechanics, Exhibitor's API — and focus on what I did know: AWS infrastructure patterns, DR operations, and how things fail in production.

The human in the loop is non-negotiable. AI generated the Kafka-specific logic. But I reviewed every ASG operation, every SSM command, every DNS update. I caught issues that AI missed — race conditions in parallel execution, edge cases in ASG scaling, timeout values that were too aggressive. The tool worked because of the combination.

The iteration speed is the real superpower. Going from "here's what went wrong in the DR exercise" to "here are four new features that prevent it" in an afternoon isn't possible with traditional development when you're in an unfamiliar domain. That speed of iteration is what transforms vibe coding from a toy into a genuine engineering tool.

Real infrastructure testing matters more than ever. When AI is writing code in a domain you don't fully understand, you need to test against real systems, not just read the code and convince yourself it looks right. Building the test ensemble was just as important as building the tool itself.

The Bottom Line

A DR engineer with zero Kafka experience built a production automation tool that manages disaster recovery across 26 clusters in 4 AWS accounts. The tool has been through a real incident, evolved through 16 versions, and completes in minutes operations that used to take an entire day.

That's not a story about AI replacing engineers. It's a story about AI amplifying an engineer's existing expertise into domains they couldn't have reached alone — at a speed that wouldn't have been possible any other way.

If you're an IT infrastructure professional sitting on a manual process that's painful, error-prone, and doesn't scale — this is exactly the kind of problem vibe coding was made for. Start with the problem you know. Describe it clearly. And let AI help you build the solution.