Files are encrypted. There's a ransom note on the domain controller. People are panicking. Phones are ringing. Nobody knows what to do first.

This is the guide for that moment. Step by step — what to do, what NOT to do, who to call, and how to get through it. Everything here is based on real-world incident response, not theory.

The First 15 Minutes

Everything you do in the next 15 minutes determines how the next 24 days go. The average ransomware recovery takes 24 days. Your actions right now decide whether you're on the short end or the long end of that average.

What NOT to Do

Under pressure, people do the wrong thing fast. Here are the six mistakes that make a ransomware incident worse:

Hour 1: Contain & Assess

The initial 15 minutes are past. Now you contain and assess systematically:

- Confirm isolation is holding. No new systems should be getting encrypted. Monitor for command-and-control (C2) callbacks and lateral movement.

- Activate the full incident response team. Incident Commander (IC), Security Lead, VMware Admin, Network Admin, Legal, Insurance contact, Comms lead. Open a dedicated bridge call.

- Assess the blast radius. How many systems? Which tiers? Which business functions are down?

- Check backup infrastructure status. Is the backup server compromised? Is the hardened repo intact? Can you access immutable copies?

- Establish the timeline. When was the first indicator? What was the attack vector? How long has the attacker been inside? This determines your clean recovery point: the most recent restore point that predates the compromise.

- Notify your cyber insurance provider. Most policies require notification within 24-72 hours. They'll assign an IR firm, legal counsel, and potentially a negotiator.
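
The timeline step feeds straight into restore-point selection. Here is a minimal sketch of that logic, assuming you can export restore-point timestamps from your backup tool. The function name, the sample dates, and the 24-hour safety margin are illustrative assumptions, not a standard. The margin is there because initial access may predate the first indicator you found.

```python
from datetime import datetime, timedelta
from typing import Optional, Sequence


def latest_clean_restore_point(
    restore_points: Sequence[datetime],
    first_indicator: datetime,
    safety_margin: timedelta = timedelta(hours=24),  # assumed default, tune with your IR team
) -> Optional[datetime]:
    """Return the newest restore point older than the cutoff, or None.

    The cutoff is the first known indicator of compromise minus the margin.
    """
    cutoff = first_indicator - safety_margin
    candidates = [rp for rp in restore_points if rp < cutoff]
    return max(candidates) if candidates else None


# Hypothetical nightly restore points and a first indicator on March 9.
points = [datetime(2024, 3, day, 2, 0) for day in (1, 5, 9, 13)]
first_ioc = datetime(2024, 3, 9, 14, 30)
print(latest_clean_restore_point(points, first_ioc))  # -> 2024-03-05 02:00:00
```

If the function returns None, no restore point is old enough to trust. Treat the result as a candidate only: the IR team still has to confirm it is clean.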

The most critical assessment: check your backup infrastructure. If the Veeam console shows a ransom note too, and you don't have a hardened Linux repo or S3 Object Lock — the room goes very quiet. That silence is the difference between "we recover from backups" and "we're discussing the ransom."

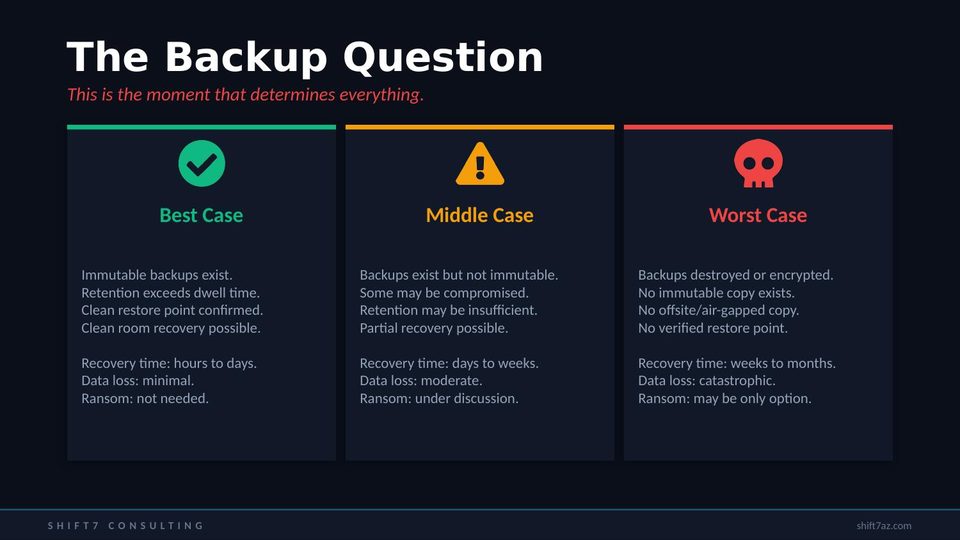

The Backup Question

This is the moment that determines everything. Three possible outcomes based on one architectural decision you made months ago:

Best Case

Immutable backups exist. Retention exceeds the attacker's dwell time. Clean restore point confirmed. Clean room recovery is possible.

Recovery: hours to days.

Data loss: minimal.

Ransom: not needed.

Middle Case

Backups exist but aren't immutable. Some may be compromised. Retention may be insufficient. Partial recovery possible.

Recovery: days to weeks.

Data loss: moderate.

Ransom: under discussion.

Worst Case

Backups destroyed or encrypted. No immutable copy. No offsite/air-gapped copy. No verified restore point.

Recovery: weeks to months.

Data loss: catastrophic.

Ransom: may be only option.

The difference between these three columns is one thing: immutable backups with sufficient retention. That single architectural decision — made weeks or months before this moment — determines which column you're in right now.
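
To make the three cases concrete, here is a rough sketch of the decision logic. The field names and the exact rules are assumptions made for illustration. A real assessment involves judgment from the IR team that a few booleans cannot capture.

```python
from dataclasses import dataclass


@dataclass
class BackupPosture:
    usable_backups_exist: bool    # at least one copy survived the attack
    immutable: bool               # a copy the attacker could not alter or delete
    retention_days: int           # how far back restore points go
    dwell_days: int               # estimated time the attacker has been inside
    restore_point_verified: bool  # a clean restore point has been confirmed


def classify(posture: BackupPosture) -> str:
    """Map a backup posture onto the best / middle / worst cases above."""
    if not posture.usable_backups_exist:
        return "worst"
    covers_dwell = posture.retention_days > posture.dwell_days
    if posture.immutable and covers_dwell and posture.restore_point_verified:
        return "best"
    # Backups exist, but immutability, retention, or verification falls short.
    return "middle"


print(classify(BackupPosture(True, True, 30, 45, True)))  # -> middle
```

The example shows why retention matters as much as immutability: a 30-day immutable repo does not give you a clean restore point if the attacker has been inside for 45 days.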

Clean Room Recovery

Never restore directly to production. The attacker may have planted persistence mechanisms — backdoors, scheduled tasks, compromised service accounts — that survive a simple restore. You need a clean room.

- Build an isolated recovery environment. Separate VLAN, no connectivity to production. Fresh vCenter. Fresh AD forest. No domain trust.

- Verify backup integrity before restore. Check immutability timestamps. Verify no encryption reached the backup chain. Validate checksums.

- Restore to the clean room first. Power on. Let the IR team scan for IOCs, persistence mechanisms, and backdoors.

- Validate application functionality in isolation. Smoke tests. Data integrity checks. Confirm no ransomware artifacts.

- Harden before reconnecting. Reset ALL credentials — every password, every service account, every API key. Patch the exploited vulnerability. Enable MFA. Segment the network. THEN connect to production.

- Monitor aggressively for 30+ days. EDR on every endpoint. 24/7 SOC monitoring. 69% of organizations that paid ransom were attacked again. The attacker may try to come back.
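
The checksum validation in the integrity step can be sketched like this. It assumes you recorded SHA-256 hashes at backup time and stored that manifest somewhere the attacker could not reach. The manifest format (relative path mapped to hex digest) is hypothetical. Your backup product likely ships its own verification tooling, and this only illustrates the idea.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large backup files never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_mismatches(manifest: Dict[str, str], root: Path) -> List[str]:
    """Return relative paths that are missing or whose hash differs from the manifest."""
    bad = []
    for rel_path, expected in manifest.items():
        target = root / rel_path
        if not target.is_file() or sha256_of(target) != expected.lower():
            bad.append(rel_path)
    return bad
```

An empty list means every file in the manifest is present and unchanged. Anything else is a file to keep out of the clean room until the IR team has looked at it.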

The Ransom Decision

This is a business decision, not a technical one. The IC decides with legal and insurance input. Here are the factors:

Arguments against paying:

- You have verified backups and can recover.

- 69% of payers were hit again.

- Payment funds criminal operations.

- There is no guarantee the attacker deletes exfiltrated data.

- There is no guarantee the decryption keys work.

- The average payment is $1M, and you still spend $1.53M on recovery anyway.

Factors that complicate:

- No usable backups exist.

- The business will cease without the data.

- Exfiltrated data creates existential legal risk.

- Lives are at risk (healthcare, critical infrastructure).

- Insurance recommends payment.

- Rebuild cost far exceeds the ransom.

Which column you're in was determined by the backup architecture decisions you made months ago. The organizations in the "don't pay" column invested in immutability. The organizations in the "may have to pay" column didn't.

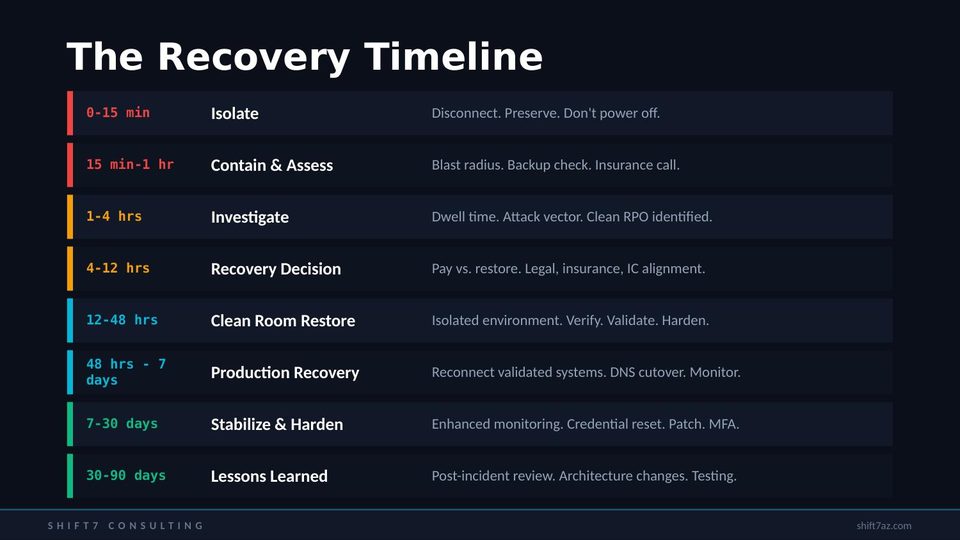

The Recovery Timeline

Here's the realistic timeline from detection to full recovery:

This is the realistic timeline. Not the vendor marketing timeline. Twenty-four days average for a reason.

How to Never Be Here Again

Six things that prevent you from ever being in this situation again:

- Implement 3-2-1-1-0 backups. Three copies, two media, one offsite, one immutable, zero errors. The immutable copy is the one that saves you. Hardened Linux repo, S3 Object Lock, or tape offsite.

- Segment backup credentials. If your backup admin accounts are in Active Directory, an attacker with domain admin can delete every backup. Use local accounts or a separate auth system.

- Enable MFA on everything. VPN, vCenter, email, backup console, cloud accounts. MFA blocks 82% of credential-based attacks for under $10K/year.

- Run ransomware tabletop exercises. Quarterly. With the full team. The team that's practiced doesn't panic.

- Test your immutable backups monthly. Try to delete a test backup from the immutable repo. It should fail. If it succeeds, fix it immediately.

- Assume breach. Plan accordingly. Zero trust. Network segmentation. Least privilege. The question isn't whether you'll be attacked — it's whether you'll survive it.
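
The 3-2-1-1-0 rule from the first item is easy to check mechanically. Here is a minimal sketch, assuming you keep an inventory of your backup copies. The `BackupCopy` fields are hypothetical, and the sketch counts only the copies you pass in, so decide for yourself whether production data counts toward the three.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BackupCopy:
    media: str          # e.g. "disk", "tape", "object-storage"
    offsite: bool
    immutable: bool
    verify_errors: int  # errors from the last restore or verification test


def check_3_2_1_1_0(copies: List[BackupCopy]) -> List[str]:
    """Return the 3-2-1-1-0 requirements that are not met (empty list = compliant)."""
    gaps = []
    if len(copies) < 3:
        gaps.append("fewer than 3 copies")
    if len({c.media for c in copies}) < 2:
        gaps.append("fewer than 2 media types")
    if not any(c.offsite for c in copies):
        gaps.append("no offsite copy")
    if not any(c.immutable for c in copies):
        gaps.append("no immutable copy")
    if any(c.verify_errors > 0 for c in copies):
        gaps.append("verification errors present")
    return gaps


inventory = [
    BackupCopy("disk", offsite=False, immutable=False, verify_errors=0),
    BackupCopy("disk", offsite=True, immutable=False, verify_errors=0),
    BackupCopy("tape", offsite=True, immutable=False, verify_errors=0),
]
print(check_3_2_1_1_0(inventory))  # -> ['no immutable copy']
```

Run something like this against your real inventory on a schedule, and treat any non-empty result as a finding to fix before the next tabletop.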

The best time to prepare was six months ago. The second best time is right now. Every item on this list can be started today. Immutable backups can be configured this week. MFA can be enabled this afternoon. A tabletop can be scheduled for next month. Don't wait for the worst day.

Don't Wait for the Worst Day

Shift7 Consulting offers DR and cyber resilience assessments. We'll tell you exactly where your gaps are — including your immutability posture — before an attacker finds them for you.
