If you're an IT professional in 2026 and you feel overwhelmed by the AI landscape, you're not alone. New tools launch every week. The buzzwords multiply faster than you can Google them. And everyone from your CEO to your helpdesk analyst has an opinion about what you should be doing with AI.

Here's the good news: you don't need to master all of it. You need to understand enough to make smart decisions, pick the right tools for your environment, and start getting real value from AI in your daily work. That's what this guide is for.

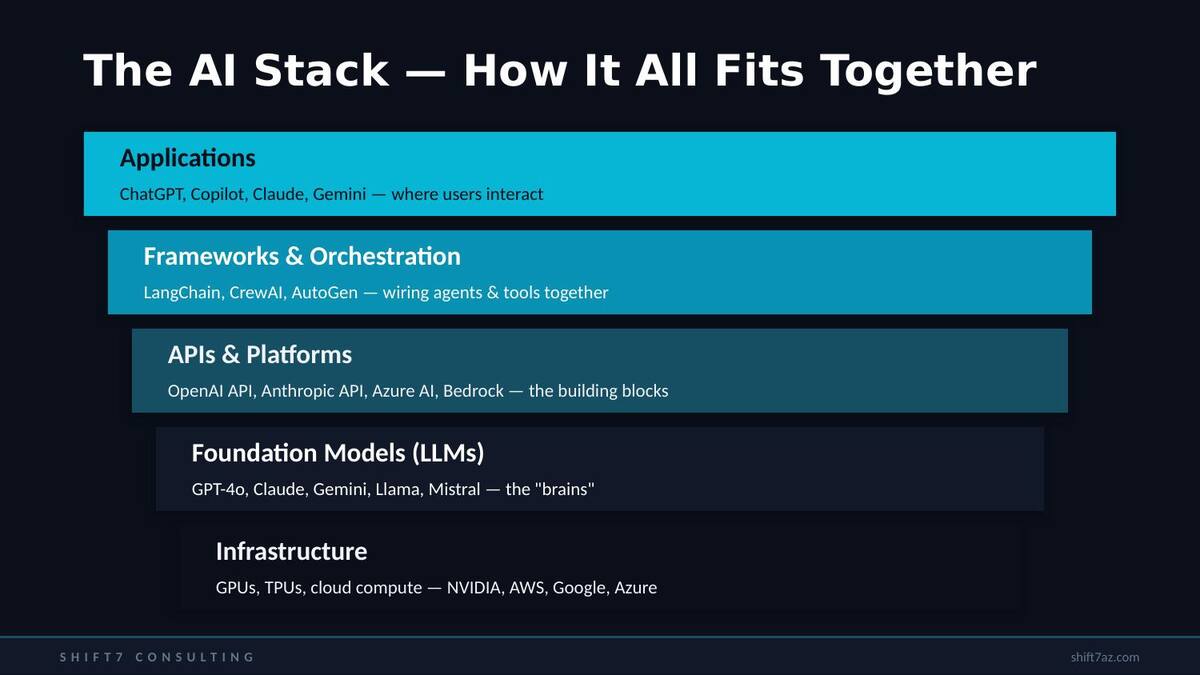

The AI Stack — How the Pieces Fit Together

Think about AI the same way you think about your infrastructure stack. There are layers, and understanding them helps you know where different tools fit.

Infrastructure sits at the bottom — the GPUs, TPUs, and cloud compute from NVIDIA, AWS, Google, and Azure that make everything else possible. On top of that are the foundation models, the large language models (LLMs) like GPT-4o, Claude, Gemini, Llama, and Mistral — the "brains" that do the actual language processing.

Above the models sits the API and platform layer — OpenAI's API, Anthropic's API, Azure AI, AWS Bedrock. This is how developers and tools access the models programmatically. Then frameworks and orchestration tools like LangChain, CrewAI, and AutoGen wire multiple AI capabilities together. And at the top are the applications you actually interact with — ChatGPT, Copilot, Claude, Gemini.

As infrastructure professionals, we operate across all of these layers. Knowing the stack helps you understand what you're actually buying, building, or integrating when someone says "we need AI."

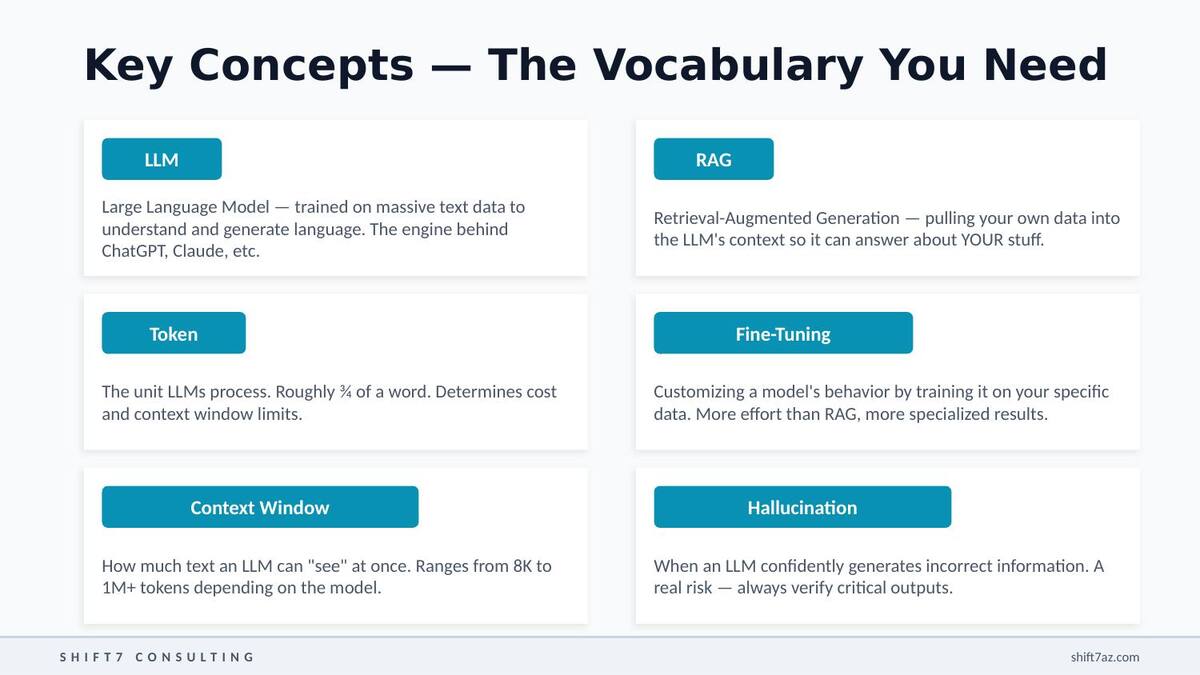

The Vocabulary You Need

Six terms come up constantly in any AI conversation. Here's what they actually mean in plain language.

LLM

Large Language Model: the core technology behind modern AI assistants. Trained on massive text data to understand and generate human language. GPT-4, Claude, and Gemini are all LLMs.

Token

The unit that LLMs process, roughly three-quarters of a word. It matters because pricing is per-token and context windows are measured in tokens. When someone says "200K context," they mean roughly 150,000 words of English text.

Context Window

How much text an LLM can "see" at once. Ranges from 8,000 tokens in older models to over a million in newer ones. Bigger windows mean you can feed in entire codebases or long documents.

RAG

Retrieval-Augmented Generation: the most common way to get an LLM to work with YOUR data. You retrieve relevant documents and insert them into the model's context at query time. Fastest path from "generic AI" to "AI that knows about our environment."

Fine-Tuning

Customizing a model's behavior by training it further on your specific data. More effort than RAG, but more specialized results. Most orgs start with RAG first.

Hallucination

When an LLM confidently generates incorrect information. The single biggest risk you need to manage: models predict likely text, and sometimes that prediction is wrong. Always verify critical outputs.
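
To make the retrieval idea concrete, here is a minimal sketch of the "pull relevant documents into the context" step. It assumes a tiny in-memory document set and scores relevance by simple word overlap; the document names, the `retrieve` and `build_prompt` helpers, and the prompt wording are all hypothetical. A real setup would typically use embeddings and a vector store instead, and would send the resulting prompt to a model.

```python
import re

# Hypothetical knowledge base; a real system would load these from a document store.
DOCS = {
    "dr-runbook": "Failover to the secondary region requires promoting the replica database first.",
    "backup-policy": "Nightly backups are retained for 30 days and copied to a second region.",
    "vpn-guide": "Remote staff connect through the VPN gateway using certificate authentication.",
}


def words(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the names of the top_k documents sharing the most words with the question."""
    q = words(question)
    ranked = sorted(docs, key=lambda name: len(q & words(docs[name])), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, docs: dict[str, str], top_k: int = 2) -> str:
    """Assemble a prompt that places the retrieved documents ahead of the question."""
    context = "\n".join(f"[{name}] {docs[name]}" for name in retrieve(question, docs, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


prompt = build_prompt("How long are backups retained?", DOCS, top_k=1)
```

The model never gets retrained here. It simply sees your documents next to the question, which is why this approach is usually quicker to stand up than fine-tuning.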

The Major Players

The AI landscape has a handful of dominant players, each with different strengths worth understanding.

OpenAI (GPT-4o, o1, o3) has the largest ecosystem and broadest tool integration. They power both ChatGPT and Microsoft Copilot. If you use Microsoft 365, you're already in their orbit.

Anthropic (Claude Opus, Sonnet, Haiku) has built a reputation for strong reasoning, very large context windows, and a focus on safety and reliability. Particularly popular for enterprise work and coding tasks.

Google (Gemini Pro, Ultra, Flash) offers deep integration with Google Cloud and Workspace. If your organization is a Google shop, Gemini deserves serious evaluation.

Meta (Llama 3, Llama 4) drives the open-weight movement. Their models are free to download, subject to Meta's license terms, and run on your own hardware. That is a game-changer for data sovereignty and air-gapped environments.

Mistral, Qwen, DeepSeek, and others make up a growing tier of smaller but competitive models that are often more efficient and surprisingly capable for specific tasks.

Don't pick a vendor and become a loyalist. The landscape shifts monthly. Focus on capabilities, evaluate regularly, and keep your architecture flexible enough to switch models when the math changes.

Open-Weight vs. Closed-Source

One of the most consequential decisions you'll face is whether to use closed-source API models, self-hosted open-weight models, or both.

Closed-source models (GPT-4o, Claude, Gemini) typically give you the strongest raw performance with zero infrastructure to manage. The trade-offs: your data goes to a third-party service, you pay per token, and there's real vendor lock-in risk.

Open-weight models (Llama, Mistral, Qwen) are free to download and run on your own hardware. Full data sovereignty, air-gapped capability, no per-token costs. The trade-offs: you need GPU infrastructure and own all the ops overhead.

In practice, most organizations will use both — API models for general-purpose tasks where performance matters most, self-hosted models for sensitive workloads where data can't leave the network. If that sounds like the hybrid cloud model you already know, that's because it is. Same playbook, different technology.
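
The hybrid split described above can be written down as a small routing rule. This is only a sketch under simple assumptions: the `Workload` fields and the backend labels `"self-hosted"` and `"api"` are hypothetical names, and a real policy would likely come from your data classification scheme rather than two booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a task you want to hand to a model."""
    name: str
    contains_sensitive_data: bool
    air_gapped: bool = False


def choose_backend(workload: Workload) -> str:
    """Send anything that must stay on the network to the self-hosted model."""
    if workload.contains_sensitive_data or workload.air_gapped:
        return "self-hosted"
    # General-purpose work goes to the hosted API model.
    return "api"


tasks = [
    Workload("summarize public vendor docs", contains_sensitive_data=False),
    Workload("review firewall configs", contains_sensitive_data=True),
]
routes = {task.name: choose_backend(task) for task in tasks}
```

Keeping the rule in one function means the policy can be reviewed by your security team and changed in one place.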

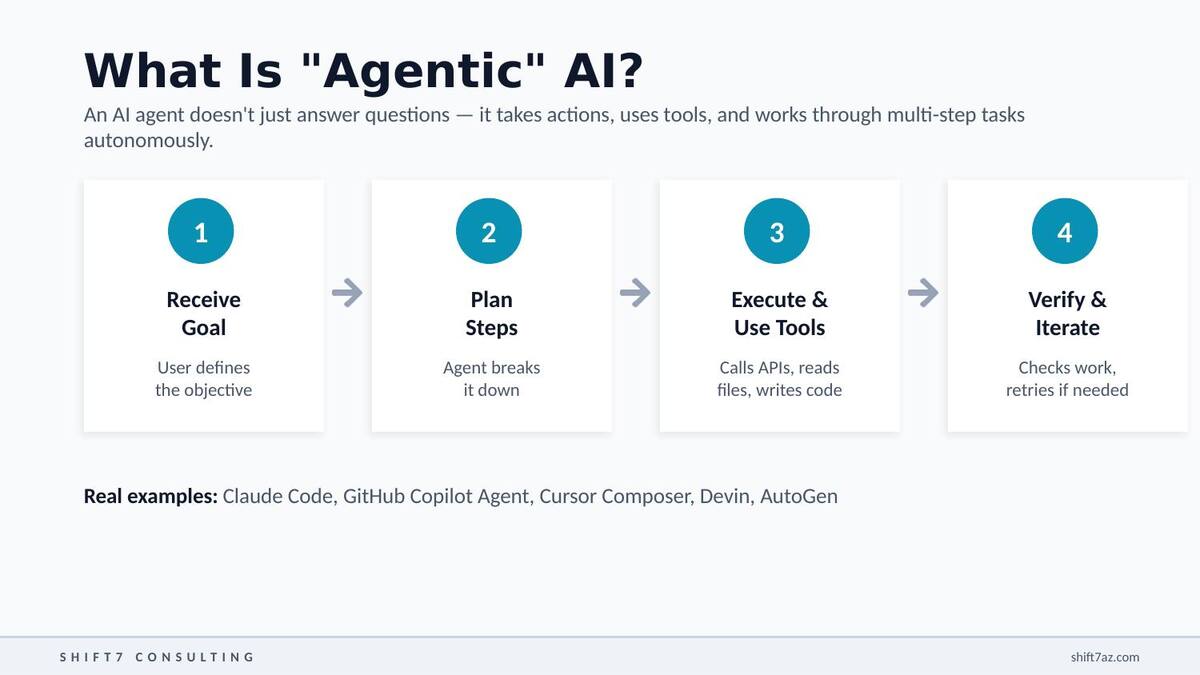

What Is "Agentic" AI?

A traditional AI interaction is simple: you ask a question, you get an answer. An AI agent is fundamentally different. You give it a goal, and it autonomously plans the steps to achieve it — executing those steps using tools, calling APIs, reading files, writing code — then verifying its own work and iterating if something didn't work right.

Think of it as the difference between asking someone for directions versus hiring someone to drive you there.

For IT infrastructure professionals, this means you can describe a complex task — "audit this CloudFormation template for security issues and generate a remediation plan" — and the agent will work through it step by step, rather than just giving you a text answer.
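
The plan, act, verify cycle described above can be sketched as a short loop. This is an illustration rather than how any particular agent product works: the planner, the tools, and the verifier here are hard-coded stubs with hypothetical names, where a real agent would ask an LLM to plan and would call real tools.

```python
from typing import Callable

# One recorded step: (tool name, argument, result)
Step = tuple[str, str, str]


def run_agent(
    goal: str,
    plan: Callable[[str, list[Step]], list[tuple[str, str]]],
    tools: dict[str, Callable[[str], str]],
    verify: Callable[[str, list[Step]], bool],
    max_rounds: int = 3,
) -> list[Step]:
    """Plan steps, execute them with tools, then check the work; repeat until verified."""
    history: list[Step] = []
    for _ in range(max_rounds):
        for tool_name, argument in plan(goal, history):
            result = tools[tool_name](argument)
            history.append((tool_name, argument, result))
        if verify(goal, history):
            return history
    raise RuntimeError(f"Goal not verified after {max_rounds} rounds: {goal}")


# Stubs for demonstration only; none of these touch real files or services.
def read_file(path: str) -> str:
    return "AccessControl: PublicRead"  # pretend template contents


def scan(template_text: str) -> str:
    return "finding: public bucket access" if "PublicRead" in template_text else "no findings"


def demo_plan(goal: str, history: list[Step]) -> list[tuple[str, str]]:
    # First round: read the template. Later rounds: scan the last result.
    if not history:
        return [("read_file", "template.yaml")]
    return [("scan", history[-1][2])]


def demo_verify(goal: str, history: list[Step]) -> bool:
    # The work counts as done once a scan has actually run.
    return any(tool == "scan" for tool, _, _ in history)


history = run_agent(
    "audit template.yaml for security issues",
    demo_plan,
    {"read_file": read_file, "scan": scan},
    demo_verify,
)
```

The `max_rounds` cap is the important design choice: an agent that can iterate needs a hard stop so a bad plan cannot loop forever.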

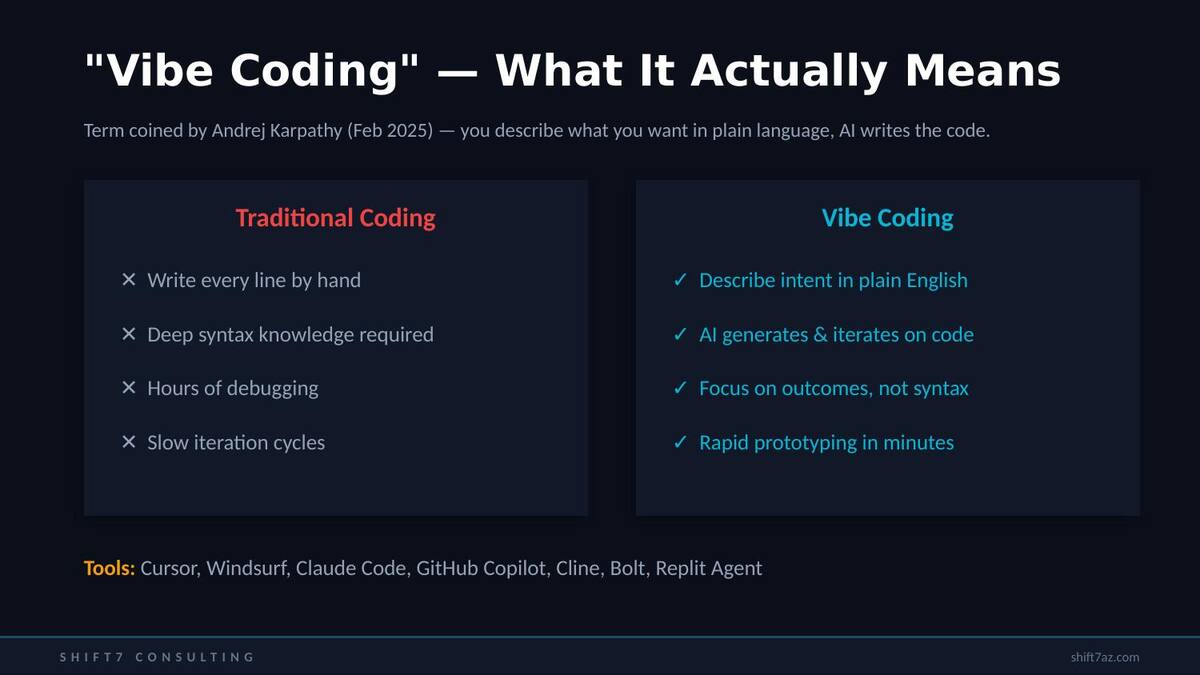

"Vibe Coding" — What It Actually Means

Vibe coding is a term coined in February 2025 by Andrej Karpathy, an OpenAI co-founder and former director of AI at Tesla. The concept is simple: instead of writing code line by line, you describe what you want in plain language and let AI generate the code. You focus on the outcome rather than the syntax.

This doesn't mean coding knowledge is irrelevant. For production systems, you absolutely need to review, test, and understand what the AI generates. But for scripts, prototypes, internal tools, and automation — a huge chunk of what IT pros do daily — vibe coding is a massive productivity multiplier.

The tools fall into four categories:

IDE Copilots

Real-time code suggestions inside your editor. The easiest entry point.

CLI Agents

Terminal-based agents that read your codebase and make autonomous changes.

Full-Stack Builders

Describe an app, get a working prototype. Incredible for internal tools.

Platform-Specific

Tuned for particular cloud and enterprise ecosystems.

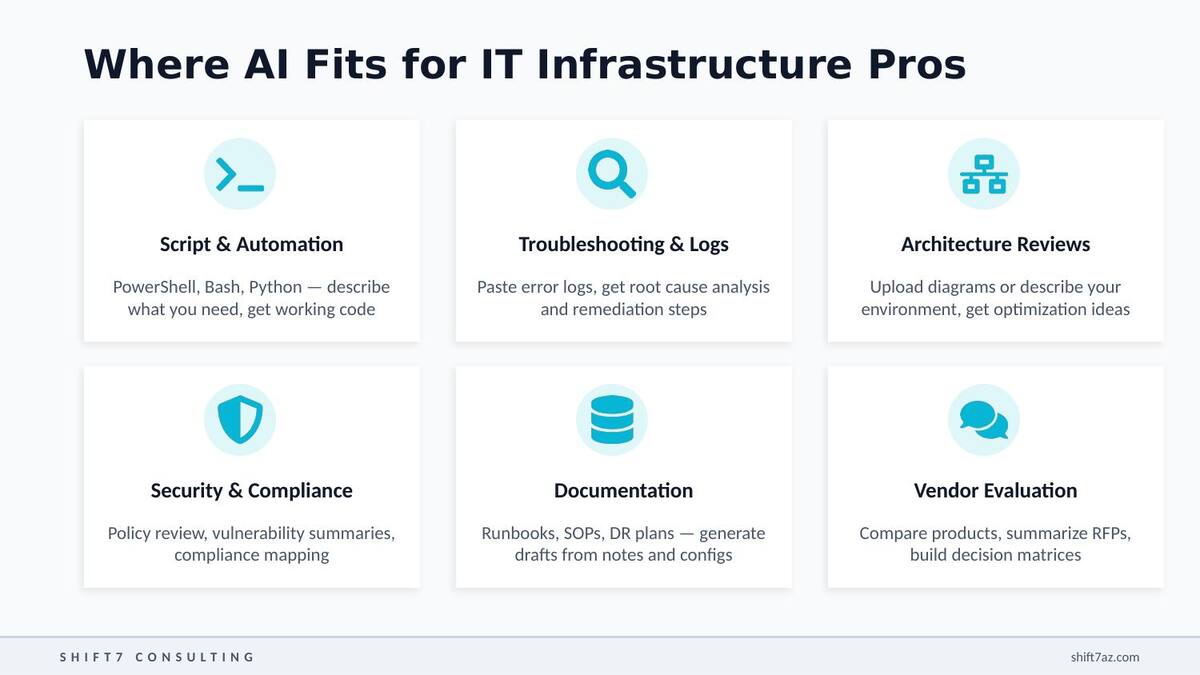

Where AI Fits for Infrastructure Pros

Here are the six highest-impact use cases I've found in my own work — each one saves hours per week once you've built the workflow.

Script & Automation Generation — describe what you need in plain English, get working PowerShell, Bash, or Python in seconds. I've used this for everything from S3 migration scripts to DR runbook automation.

Troubleshooting & Log Analysis — paste a stack trace, error log, or config dump and get root cause analysis with specific remediation steps.

Architecture & Design Reviews — describe your environment or share a diagram and get feedback on optimization, cost, and resilience. It won't replace a senior architect's judgment, but it's an excellent second pair of eyes.

Security & Compliance — policy review, vulnerability assessment summaries, mapping controls to frameworks like SOC 2 or HIPAA.

Documentation Generation — runbooks, SOPs, DR plans, migration guides. Solid first drafts from your notes and configs. This alone can save hours every week.

Vendor Evaluation — building comparison matrices, summarizing RFP responses, creating structured decision frameworks.
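
For the log analysis use case above, one practical wrinkle is that logs are often far larger than you want to paste. Here is a rough sketch that keeps only the most recent lines that fit a token budget. It assumes the three-quarters-of-a-word rule of thumb for tokens, which is only an approximation (stack traces and hex strings usually tokenize less efficiently), and the helper names are hypothetical.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb; real tokenizers vary by model and content


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the whitespace-separated word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def tail_within_budget(log_text: str, max_tokens: int) -> str:
    """Keep the newest log lines whose estimated tokens fit within max_tokens."""
    kept: list[str] = []
    used = 0
    # Walk backwards so the most recent lines are kept first.
    for line in reversed(log_text.splitlines()):
        cost = estimate_tokens(line)
        if used + cost > max_tokens:
            break
        kept.append(line)
        used += cost
    return "\n".join(reversed(kept))


sample_log = "boot ok now\ndisk check passed\nservice failed to start"
trimmed = tail_within_budget(sample_log, max_tokens=10)
```

Keeping the tail rather than the head is a deliberate choice, since the lines closest to the failure are usually the most useful for root cause analysis.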

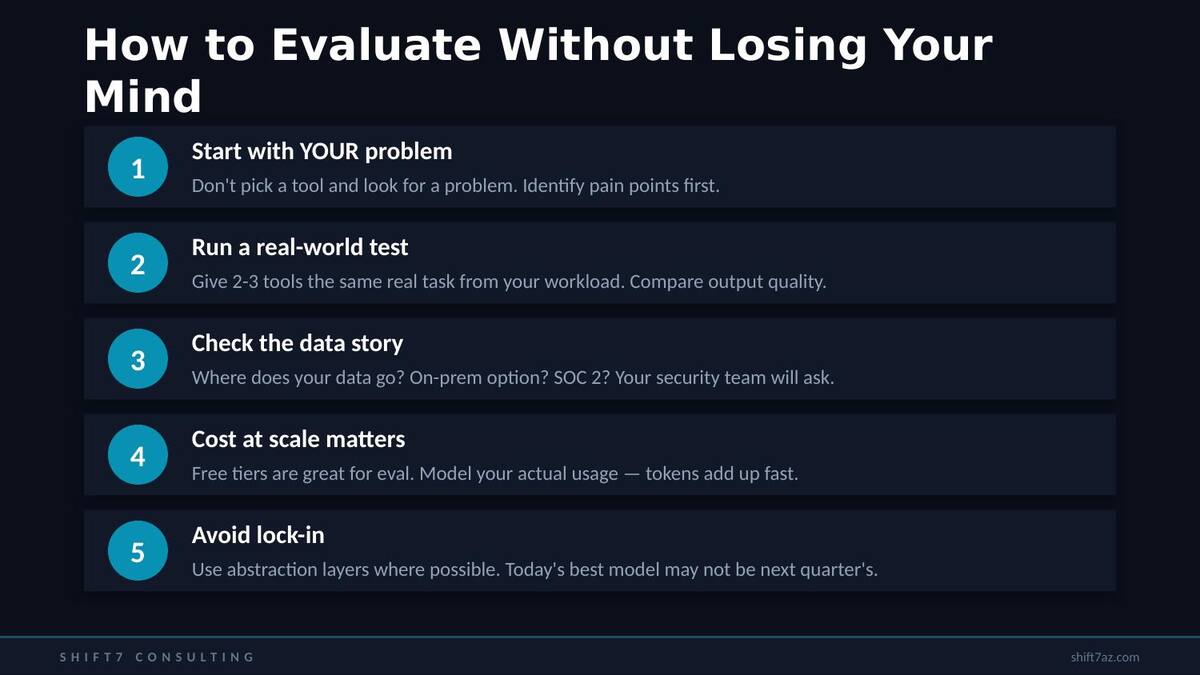

How to Evaluate Without Losing Your Mind

Start with YOUR problem

Don't pick a tool and look for a use case. Identify your pain points first — what's eating your time right now?

Run a real-world test

Take an actual task from your backlog and give it to 2-3 tools. Compare outputs on your work, not demo problems.

Check the data story

Where does your data go? Is there an on-prem option? Does the vendor have a SOC 2 report? Your security team will ask, so have the answers ready.

Model cost at scale

Free tiers are great for eval. But model your actual team usage — tokens add up fast once everyone's on board.
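
A back-of-the-envelope model is usually enough to see how quickly tokens add up. The sketch below assumes the rough rule of three-quarters of a word per token and uses placeholder prices per million tokens. Those prices are made up for illustration, so substitute your vendor's current rates.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb for English text


def tokens_for_words(word_count: int) -> int:
    """Convert a word count to an approximate token count."""
    return round(word_count / WORDS_PER_TOKEN)


def monthly_cost(
    users: int,
    requests_per_user_per_day: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,   # placeholder price, not a real quote
    output_price_per_million: float,  # placeholder price, not a real quote
    workdays_per_month: int = 22,
) -> float:
    """Estimate monthly spend for a team from per-request token usage."""
    requests = users * requests_per_user_per_day * workdays_per_month
    input_cost = requests * input_tokens_per_request * input_price_per_million / 1_000_000
    output_cost = requests * output_tokens_per_request * output_price_per_million / 1_000_000
    return input_cost + output_cost


# 50 people, 20 requests a day, 2,000 tokens in and 500 tokens out per request
estimate = monthly_cost(50, 20, 2_000, 500, input_price_per_million=3.0, output_price_per_million=15.0)
```

With these placeholder numbers the estimate works out to 297.0 per month. The point is less the exact figure than seeing which input (headcount, request volume, or prompt size) dominates your bill.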

Avoid lock-in

Use abstraction layers where possible. Today's best model may not be next quarter's. Keep your options open.
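
One lightweight way to build that abstraction layer is to have your scripts depend on a small interface rather than on a specific vendor's client. The sketch below is an assumption about how you might structure it, not a real SDK: `ChatModel`, `HostedStub`, and `LocalStub` are hypothetical names, and the stubs just echo the prompt where real adapters would call an API or a self-hosted model.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface your own code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class HostedStub:
    """Stand-in for an adapter around a vendor API client."""

    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class LocalStub:
    """Stand-in for an adapter around a self-hosted open-weight model."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


def summarize_incident(model: ChatModel, notes: str) -> str:
    """Business logic that works with any backend implementing ChatModel."""
    return model.complete(f"Summarize this incident in three bullet points:\n{notes}")


hosted_summary = summarize_incident(HostedStub(), "Disk filled up on db01 at 02:14.")
local_summary = summarize_incident(LocalStub(), "Disk filled up on db01 at 02:14.")
```

Switching models then means writing one new adapter class, while `summarize_incident` and everything built on it stays untouched.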

Pitfalls to Avoid

Trusting AI output blindly. AI is a powerful assistant, not an infallible expert. In infrastructure work, a subtly wrong config or security recommendation can cause real damage. Always review, always test.

Trying to boil the ocean. Don't adopt everything at once. Pick one use case, get genuinely good at it, build the muscle memory, then expand.

Ignoring data privacy. Pasting sensitive configs, credentials, or PII into public AI tools is a real risk. Know your org's acceptable use policy. Use enterprise tiers or self-hosted models for sensitive data.

Thinking AI replaces expertise. It amplifies it. A senior engineer with AI becomes dramatically more productive. AI without human oversight produces mediocre or dangerous results. The human in the loop is non-negotiable.
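
On the data privacy pitfall above, a simple pre-paste scrubber can catch the most obvious leaks. This is a sketch under loud assumptions: the three patterns (AWS access key IDs, `password=`-style assignments, and email addresses) are illustrative, far from exhaustive, and no substitute for your org's policy or a proper secret scanner.

```python
import re

# Illustrative patterns only; real configs contain many other kinds of secrets.
PATTERNS = [
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED_AWS_KEY_ID]"),
    # key = value or key: value assignments for common secret names
    (re.compile(r"(?i)\b(password|passwd|secret|token)(\s*[=:]\s*)\S+"), r"\1\2[REDACTED]"),
    # email addresses
    (re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+"), "[REDACTED_EMAIL]"),
]


def redact(text: str) -> str:
    """Replace anything matching a known secret pattern before the text leaves your machine."""
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


config = "db_user: admin\npassword = hunter2\nowner: ops@example.com"
safe_config = redact(config)
```

Treat a scrubber like this as a seatbelt rather than a guarantee. Reviewing what you paste is still part of the job.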

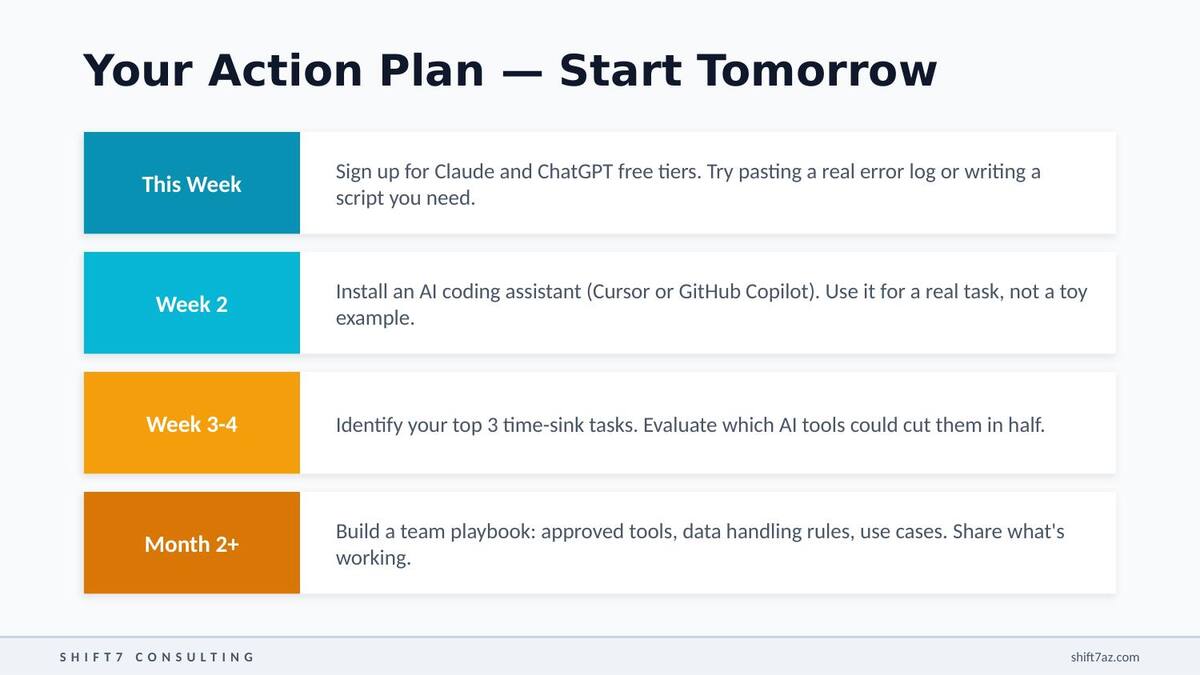

Your Action Plan

Here's how to get started — broken into phases you can actually execute.

Sign up for Claude and ChatGPT free tiers. Give them a real task — paste an actual error log, write a script you actually need. Don't start with toy examples.

Install an AI coding assistant — Cursor or GitHub Copilot. Use it on a real task in your actual codebase: a Terraform module, a Python script, a monitoring dashboard.

Identify the three tasks that consume the most time every week. Evaluate which AI tools could cut each of them in half.

Build a team playbook: approved tools, data handling rules, proven use cases. Turn individual productivity gains into team-wide improvements.

The Bottom Line

You don't need to master the entire AI landscape. Nobody can — it moves too fast. What you need is to start with one real problem and one good tool. Get comfortable with the workflow. Build confidence. Then expand.

The IT professionals who thrive in the next few years won't be the ones who know the most about AI architecture. They'll be the ones who figured out how to use AI tools to solve real infrastructure problems faster and more reliably than they could before.

Start this week. You'll be surprised how quickly it becomes indispensable.